The scene


A central concept: the scene

As a visualization application, 3D Slicer provides graphical representations of patient-specific data embedded in a GUI with configurable layouts that facilitate inspection, processing and interaction. Such patient-specific data is provided by a host of biomedical imaging modalities, across different spatial and temporal scales. 3D Slicer has a bias towards volumetric modalities, such as CT, MRI and PET, due to their clinical relevance, but it is also possible to integrate 1D and 2D signals, as well as the time-varying counterparts of all of these. Moreover, the magnitudes conveyed by the signals can be scalars, vectors or tensors. Graphical representations are then provided by specialized 3D, slice (2D) and chart (1D) viewers.

Since 3D Slicer is largely built on top of the Visualization Toolkit (VTK), it is very helpful to read The Visualization Toolkit: An Object-Oriented Approach to 3D Graphics, 4th Edition, a.k.a. The VTK Textbook, which is the official reference guide for VTK and is freely available for download.

The coordinate systems

In visualization and graphics, the scene consists of a virtual world in which objects (sometimes called actors), lights and cameras provide representations to be presented to the user.

Model coordinates

Each of the models that will take part in the scene has its own coordinate system. These model coordinate systems depend on the object itself and have their own coordinate axes: the origin and orientation of these axes may differ from one object to another, as they depend on how the data for each object was captured. Model coordinate systems can be either 3D or 2D.

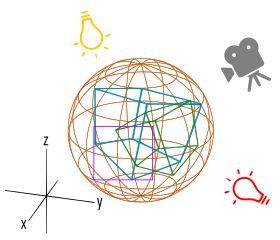

Figure 1 shows four example objects:

- OBJECT #1: a sphere, with the origin of coordinates placed in the very center of the sphere

- OBJECT #2: a cube, with the origin of coordinates placed in one of the corners

- OBJECT #3: a cube as well, with the origin of coordinates placed in a different corner and also a different axis orientation

- OBJECT #4: a 2D object, with only X and Y coordinates

These four objects must now have their coordinate systems unified in order to be displayed coherently together, which leads us to the next step.

Object types

A rough classification of the different object types to be found in the scene is the following:

- Actors: the elements to be displayed. In our particular case, these actors come from different data sources, such as CT scanners, MR, ultrasound, X-ray, etc.

- Lights: the light sources that interact with the actors and allow them to be seen

- Cameras: the virtual devices that define what will be displayed

Note that there can be as many objects as needed, but there must be at least one of each type in order to build a scene.

World coordinates

The world sets a reference system with respect to which actors, lights and cameras are positioned.

Figure 2 shows the same four objects from the previous step, now displayed in a single coordinate system, the world one (its axes are represented in black). Note that the origin of this coordinate system, the (0,0,0) point, and the orientation of its axes do not match any of the coordinate systems the objects originally had, since the world coordinate system is common to all of them. In this example, object #4, displayed in purple, was a 2D object that is now placed in a 3D coordinate system.

The scene setup is completed by adding a light source and a camera, both of which are also referenced in the world coordinate system, as seen in Figure 3.

Affine transformations relate cameras, objects and object components to the world reference system. They consist of sequences of translations, rotations, changes of scale and, possibly, planar shears, and can be represented in homogeneous coordinates by 4x4 matrices. Interestingly, perspective and orthographic projections from 3D space onto the image plane of the viewing cameras can also be represented by 4x4 (or 3x4) matrices. All of these are cases of projective transformations. As a result, every element in the virtual world is represented with respect to the world reference system by sequences of 4x4 matrix transformations, possibly ordered in a hierarchy (e.g. to represent an object's internal components). For more information regarding coordinate transformations see [here].
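As a minimal sketch of how such 4x4 matrices act on points, the following pure-Python example composes a scale, a rotation and a translation into one model-to-world matrix and applies it to a point in homogeneous coordinates. The helper names (`matmul`, `translation`, `scaling`, `rotation_z`, `apply`) and the numeric values are illustrative assumptions, not part of 3D Slicer or VTK, and a column-vector convention is assumed.

```python
import math

def matmul(a, b):
    """Multiply two 4x4 matrices stored as nested lists (row-major)."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def translation(tx, ty, tz):
    return [[1, 0, 0, tx],
            [0, 1, 0, ty],
            [0, 0, 1, tz],
            [0, 0, 0, 1]]

def scaling(sx, sy, sz):
    return [[sx, 0, 0, 0],
            [0, sy, 0, 0],
            [0, 0, sz, 0],
            [0, 0, 0, 1]]

def rotation_z(angle_deg):
    """Rotation about the z axis by the given angle in degrees."""
    c = math.cos(math.radians(angle_deg))
    s = math.sin(math.radians(angle_deg))
    return [[c, -s, 0, 0],
            [s,  c, 0, 0],
            [0,  0, 1, 0],
            [0,  0, 0, 1]]

def apply(m, point):
    """Apply a 4x4 matrix to a 3D point via homogeneous coordinates."""
    x, y, z = point
    h = [x, y, z, 1.0]
    out = [sum(m[i][k] * h[k] for k in range(4)) for i in range(4)]
    # For affine matrices w stays 1; the division only matters for
    # projective (e.g. perspective) matrices.
    w = out[3]
    return (out[0] / w, out[1] / w, out[2] / w)

# Hypothetical model-to-world transform. With column vectors the rightmost
# matrix acts first: scale by 2, rotate 90 degrees about z, then translate.
model_to_world = matmul(translation(10, 0, 0),
                        matmul(rotation_z(90), scaling(2, 2, 2)))

corner_model = (1.0, 0.0, 0.0)
corner_world = apply(model_to_world, corner_model)
print(corner_world)  # approximately (10, 2, 0), up to floating-point rounding
```

Because the three steps collapse into a single matrix, a hierarchy of objects can be handled by multiplying the parent's matrix with each child's matrix once, instead of transforming every point several times.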

Practical application

In our specific case we won't be working with abstract spheres, cubes or squares; we will be working with volumetric data acquired through different scanners and medical devices.

The coordinate system used when working with human bodies is called RAS. The term is an acronym formed from the names of the three coordinate axes, whose unit vectors point in the following directions:

- Right-Left: corresponds to the sagittal plane, perpendicular to the ground and separating the right R from the left L (shown in blue in Figure 4)

- Anterior-Posterior: corresponds to the coronal plane, perpendicular to the ground and separating the front A from the back P (shown in red in Figure 4)

- Superior-Inferior: corresponds to the axial plane, parallel to the ground and separating the head S from the feet I (shown in green in Figure 4)

This system is common, scanner-independent and patient-centered; it allows the coherent integration and visualization of multiple images and data types in 2D and 3D viewers. The world reference basis in 3D Slicer corresponds to the patient-specific RAS, and any data must be transformed to this system.

Volumetric image data is acquired and provided in the reference system of the raster scan, IJK, also called column, row and slice (i and j are the column and row coordinates, and k is the slice number). To integrate such data into the 3D Slicer scene as objects or actors, an IJKtoRAS transformation matrix must be provided for every dataset. See Figure 5.
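To make the role of the IJKtoRAS matrix concrete, here is a small sketch that builds one from voxel spacing, the RAS position of voxel (0,0,0) and the RAS directions of the i, j and k axes, and then maps a voxel index to patient coordinates. The function names and all numeric values are assumptions chosen for illustration; a real dataset supplies its own geometry.

```python
def ijk_to_ras_matrix(spacing, origin, directions):
    """Build a 4x4 IJKtoRAS matrix (nested lists, row-major).

    spacing    -- voxel size in mm along the i, j and k axes
    origin     -- RAS position (mm) of voxel (0, 0, 0)
    directions -- three unit vectors in RAS giving the i, j and k axes
    """
    m = [[0.0] * 4 for _ in range(4)]
    for col in range(3):
        for row in range(3):
            # Column `col` is the direction of that index axis,
            # scaled by the voxel size along it.
            m[row][col] = directions[col][row] * spacing[col]
    for row in range(3):
        m[row][3] = origin[row]
    m[3][3] = 1.0
    return m

def ijk_to_ras(m, ijk):
    """Map a voxel index (i, j, k) to RAS coordinates in mm."""
    i, j, k = ijk
    h = (i, j, k, 1.0)
    return tuple(sum(m[r][c] * h[c] for c in range(4)) for r in range(3))

# Hypothetical volume: 0.5 x 0.5 x 2.0 mm voxels, columns running towards
# the patient's left, rows towards posterior, slices towards superior.
matrix = ijk_to_ras_matrix(
    spacing=(0.5, 0.5, 2.0),
    origin=(100.0, 120.0, -50.0),
    directions=((-1, 0, 0), (0, -1, 0), (0, 0, 1)),
)

print(ijk_to_ras(matrix, (10, 20, 5)))  # (95.0, 110.0, -40.0)
```

Inside 3D Slicer this matrix is stored with the volume node (for example, it can be read through `GetIJKToRASMatrix` on a volume node), so it rarely needs to be assembled by hand; the sketch only illustrates what the matrix encodes.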

DESCRIPTION ABOUT THE SCANNER XYZ COORDINATES. See Figure 6.

View coordinates

The view coordinate system represents what is visible to the camera. This consists of a pair of x and y values, ranging between (-1,1), and a z depth coordinate; see Figure 7. The x, y values specify location in the image plane, while the z coordinate represents the distance, or range, from the camera. The display coordinate system uses the same basis as the view coordinate system, but instead of using negative one to one as the range, the coordinates are actual x, y pixel locations on the image plane. Factors such as the window’s size on the display determine how the view coordinate range of (-1,1) is mapped into pixel locations. This is also where the viewport comes into effect, so that different views can be integrated into the same window (see Chapter 3 and Fig. 3-14 of the VTK Textbook).
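The mapping from the view range (-1,1) to pixel locations can be sketched as follows. This is a simplified illustration, assuming the viewport is given as fractions of the window, (xmin, ymin, xmax, ymax), with the origin at the lower-left corner; the function name `view_to_display` is hypothetical, and VTK's own implementation may differ in details such as rounding.

```python
def view_to_display(x_view, y_view, window_size,
                    viewport=(0.0, 0.0, 1.0, 1.0)):
    """Map view coordinates in [-1, 1] to pixel coordinates.

    window_size -- (width, height) of the render window in pixels
    viewport    -- (xmin, ymin, xmax, ymax) as fractions of the window
    """
    width, height = window_size
    xmin, ymin, xmax, ymax = viewport
    # Rescale [-1, 1] to [0, 1] inside the viewport.
    u = (x_view + 1.0) / 2.0
    v = (y_view + 1.0) / 2.0
    # Place the viewport inside the window and convert to pixels.
    x_pixel = (xmin + u * (xmax - xmin)) * width
    y_pixel = (ymin + v * (ymax - ymin)) * height
    return (x_pixel, y_pixel)

# Center of a view that fills an 800 x 600 window.
print(view_to_display(0.0, 0.0, (800, 600)))  # (400.0, 300.0)

# Same view coordinates, but the viewport covers only the right half.
print(view_to_display(0.0, 0.0, (800, 600),
                      viewport=(0.5, 0.0, 1.0, 1.0)))  # (600.0, 300.0)
```

The second call shows why the viewport matters: identical view coordinates land on different pixels depending on which part of the window the view occupies.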

Display coordinates
